AI Reasoning for Document Analysis

AI adoption in enterprise environments faces a critical challenge: trust. Despite the impressive capabilities of modern Large Language Models (LLMs), organizations struggle with a fundamental question: can we trust AI-generated results enough to act on them without extensive verification?

At Docflo, we've observed this pattern repeatedly. Users would receive AI-generated insights or extracted data, but would hesitate to move forward without manually double-checking every detail. This hesitation came not from skepticism about AI capabilities, but from a lack of understanding of how the AI reached its conclusions.

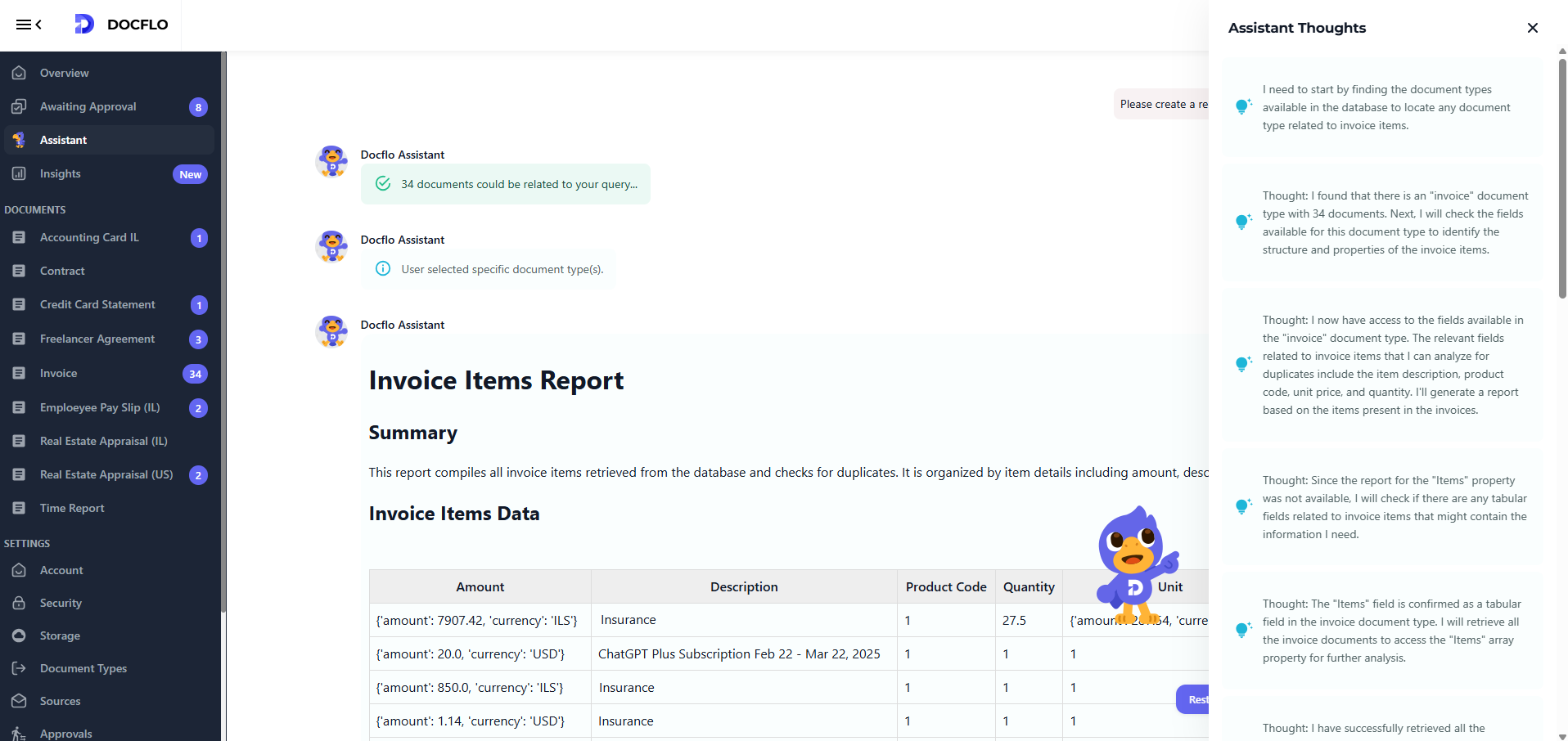

So, after some real human thinking, we added this to our Assistant:

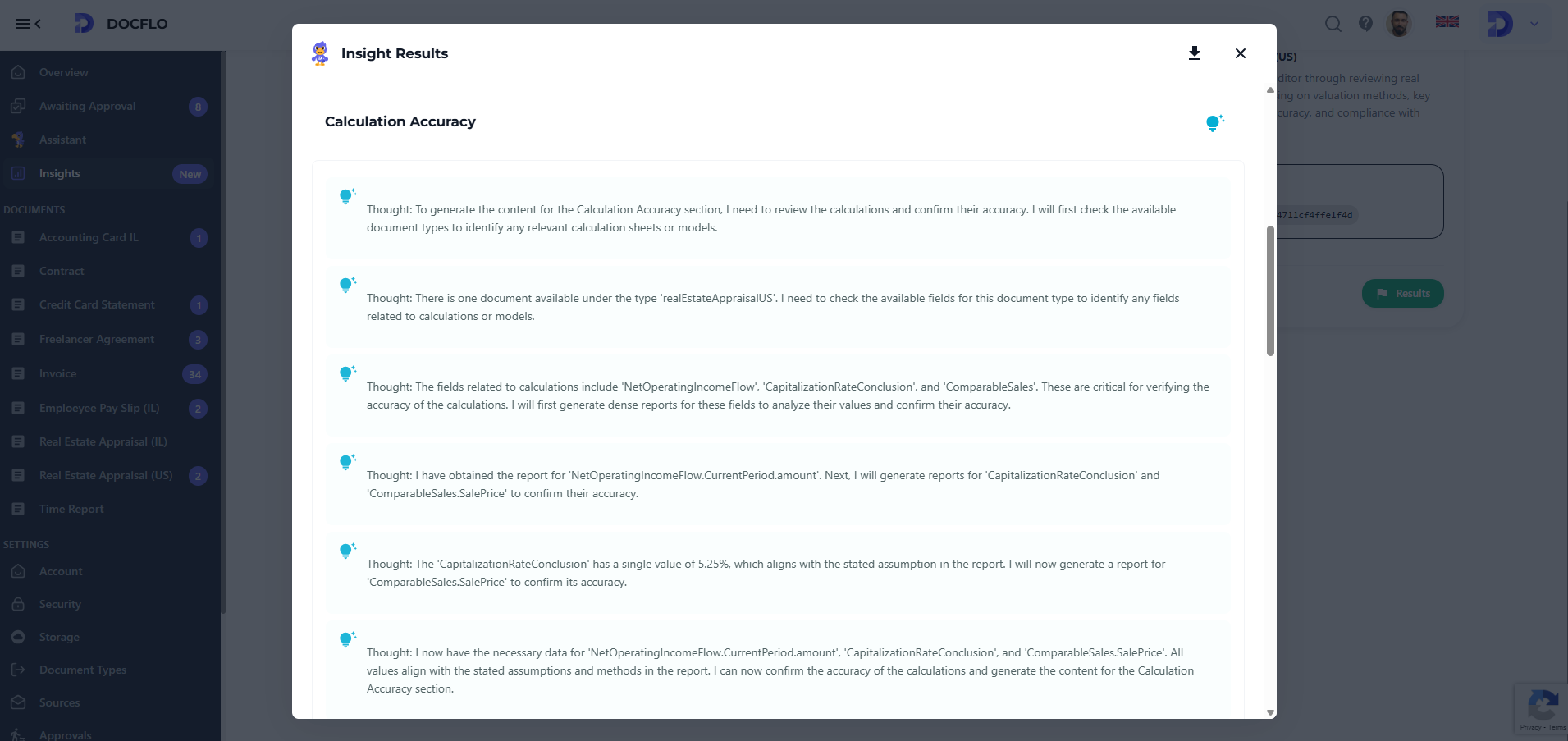

We've also implemented comprehensive thought tracing across agent execution. For each step of an agent, you will find an explanation of the result.
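To make the idea of per-step tracing concrete, here is a minimal Python sketch of what such a trace could look like. It is an illustration under our own assumptions, not Docflo's actual implementation: the `StepTrace` and `AgentTrace` names, their fields, and the sample invoice values are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class StepTrace:
    """One agent step plus the explanation shown to the user (hypothetical shape)."""
    step: str       # name of the step, e.g. "extract_total"
    result: str     # what the step produced
    reasoning: str  # why the agent produced that result


@dataclass
class AgentTrace:
    """Collects one trace entry for every step of a single agent run."""
    steps: list[StepTrace] = field(default_factory=list)

    def record(self, step: str, result: str, reasoning: str) -> None:
        """Store the result of a step together with its explanation."""
        self.steps.append(StepTrace(step, result, reasoning))

    def explain(self) -> str:
        """Render each step's result with its reasoning, one line per step."""
        return "\n".join(
            f"{i}. {s.step}: {s.result} (because {s.reasoning})"
            for i, s in enumerate(self.steps, start=1)
        )


# Made-up sample run over an invoice document.
trace = AgentTrace()
trace.record("classify", "invoice", "the header contains 'Invoice No.'")
trace.record("extract_total", "1,250.00 EUR", "the value follows the 'Total due' label")
print(trace.explain())
```

Keeping the reasoning next to each result, rather than in a separate log, means a reviewer can check only the step they doubt instead of re-verifying the whole run.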

Since making these additions, we've noticed that document processing and analysis now feel much more "secure" to our users. People trust the results more and are less afraid of LLM hallucinations. They dig into the reasoning only when they are not confident about a result.